这就是你需要的人脸特征点检测方法

人脸特征点检测是人脸检测过程中的一个重要环节。以往我们采用的方法是OpenCV或者Dlib,虽然Dlib优于OpenCV,但是检测出的68个点并没有覆盖额头区域。Reddit一位网友便在此基础上做了进一步研究,能够检测出81个面部特征点,使得准确度有所提高。

或许,这就是你需要的人脸特征点检测方法。

人脸特征点检测(Facial landmark detection)是人脸检测过程中的一个重要环节。是在人脸检测的基础上进行的,对人脸上的特征点例如嘴角、眼角等进行定位。

近日,Reddit一位网友po出一个帖子,表示想与社区同胞们分享自己的一点研究成果:

其主要的工作就是在人脸检测Dlib库68个特征点的基础上,增加了13个特征点(共81个),使得头部检测和图像操作更加精确。

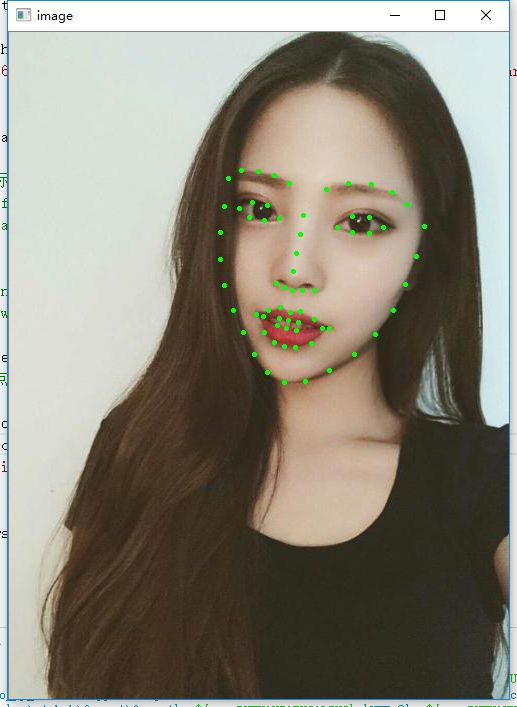

现在来看一下demo:

demo视频链接:

https://www.youtube.com/watch?v=mDJrASIB1T0

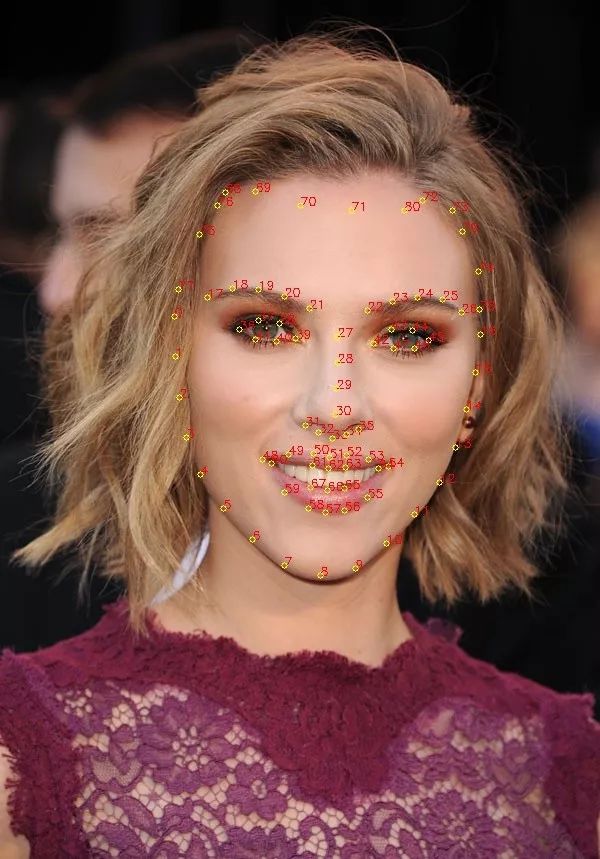

81个特征点,人脸特征点检测更加精准

以往我们在做人脸特征点检测的时候,通常会用OpenCV来进行操作。

但自从人脸检测Dlib库问世,网友们纷纷表示:好用!Dlib≥OpenCV!Dlib具有更多的人脸识别模型,可以检测脸部68甚至更多的特征点。

我们来看一下Dlib的效果:

Dlib人脸特征点检测效果图

那么这68个特征点又是如何分布的呢?请看下面这张“面相图”:

人脸68个特征点分布

但无论是效果图和“面相图”,我们都可以发现在额头区域是没有分布特征点的。

于是,网友便提出了一个特征点能够覆盖额头区域的模型。

该模型是一个自定义形状预测模型,在经过训练后,可以找到任何给定图像中的81个面部特征点。

它的训练方法类似于Dlib的68个面部特征点形状预测器。只是在原有的68个特征点的基础上,在额头区域增加了13个点。这就使得头部的检测,以及用于需要沿着头部顶部的点的图像操作更加精准。

81个特征点效果图

这13个额外的特征点提取的方法,是根据该博主之前的工作完成的。

GitHub地址:

https://github.com/codeniko/eos

该博主继续使用Surrey Face Model,并记下了他认为适合他工作的13个点,并做了一些细节的修改。

当然,博主还慷慨的分享了训练的代码:

1#!/usr/bin/python 2# The contents of this file are in the public domain. See LICENSE_FOR_EXAMPLE_PROGRAMS.txt 3# 4# This example program shows how to use dlib's implementation of the paper: 5# One Millisecond Face Alignment with an Ensemble of Regression Trees by 6# Vahid Kazemi and Josephine Sullivan, CVPR 2014 7# 8# In particular, we will train a face landmarking model based on a small 9# dataset and then evaluate it. If you want to visualize the output of the 10# trained model on some images then you can run the 11# face_landmark_detection.py example program with predictor.dat as the input 12# model. 13# 14# It should also be noted that this kind of model, while often used for face 15# landmarking, is quite general and can be used for a variety of shape 16# prediction tasks. But here we demonstrate it only on a simple face 17# landmarking task. 18# 19# COMPILING/INSTALLING THE DLIB PYTHON INTERFACE 20# You can install dlib using the command: 21# pip install dlib 22# 23# Alternatively, if you want to compile dlib yourself then go into the dlib 24# root folder and run: 25# python setup.py install 26# 27# Compiling dlib should work on any operating system so long as you have 28# CMake installed. On Ubuntu, this can be done easily by running the 29# command: 30# sudo apt-get install cmake 31# 32# Also note that this example requires Numpy which can be installed 33# via the command: 34# pip install numpy 35 36import os 37import sys 38import glob 39 40import dlib 41 42# In this example we are going to train a face detector based on the small 43# faces dataset in the examples/faces directory. This means you need to supply 44# the path to this faces folder as a command line argument so we will know 45# where it is. 46if len(sys.argv) != 2: 47 print( 48 "Give the path to the examples/faces directory as the argument to this " 49 "program. For example, if you are in the python_examples folder then " 50 "execute this program by running:\n" 51 " ./train_shape_predictor.py ../examples/faces") 52 exit() 53faces_folder = sys.argv[1] 54 55options = dlib.shape_predictor_training_options() 56# Now make the object responsible for training the model. 57# This algorithm has a bunch of parameters you can mess with. The 58# documentation for the shape_predictor_trainer explains all of them. 59# You should also read Kazemi's paper which explains all the parameters 60# in great detail. However, here I'm just setting three of them 61# differently than their default values. I'm doing this because we 62# have a very small dataset. In particular, setting the oversampling 63# to a high amount (300) effectively boosts the training set size, so 64# that helps this example. 65options.oversampling_amount = 300 66# I'm also reducing the capacity of the model by explicitly increasing 67# the regularization (making nu smaller) and by using trees with 68# smaller depths. 69options.nu = 0.05 70options.tree_depth = 2 71options.be_verbose = True 72 73# dlib.train_shape_predictor() does the actual training. It will save the 74# final predictor to predictor.dat. The input is an XML file that lists the 75# images in the training dataset and also contains the positions of the face 76# parts. 77training_xml_path = os.path.join(faces_folder, "training_with_face_landmarks.xml") 78dlib.train_shape_predictor(training_xml_path, "predictor.dat", options) 79 80# Now that we have a model we can test it. dlib.test_shape_predictor() 81# measures the average distance between a face landmark output by the 82# shape_predictor and where it should be according to the truth data. 83print("\nTraining accuracy: {}".format( 84 dlib.test_shape_predictor(training_xml_path, "predictor.dat"))) 85# The real test is to see how well it does on data it wasn't trained on. We 86# trained it on a very small dataset so the accuracy is not extremely high, but 87# it's still doing quite good. Moreover, if you train it on one of the large 88# face landmarking datasets you will obtain state-of-the-art results, as shown 89# in the Kazemi paper. 90testing_xml_path = os.path.join(faces_folder, "testing_with_face_landmarks.xml") 91print("Testing accuracy: {}".format( 92 dlib.test_shape_predictor(testing_xml_path, "predictor.dat"))) 93 94# Now let's use it as you would in a normal application. First we will load it 95# from disk. We also need to load a face detector to provide the initial 96# estimate of the facial location. 97predictor = dlib.shape_predictor("predictor.dat") 98detector = dlib.get_frontal_face_detector() 99100# Now let's run the detector and shape_predictor over the images in the faces101# folder and display the results.102print("Showing detections and predictions on the images in the faces folder...")103win = dlib.image_window()104for f in glob.glob(os.path.join(faces_folder, "*.jpg")):105 print("Processing file: {}".format(f))106 img = dlib.load_rgb_image(f)107108 win.clear_overlay()109 win.set_image(img)110111 # Ask the detector to find the bounding boxes of each face. The 1 in the112 # second argument indicates that we should upsample the image 1 time. This113 # will make everything bigger and allow us to detect more faces.114 dets = detector(img, 1)115 print("Number of faces detected: {}".format(len(dets)))116 for k, d in enumerate(dets):117 print("Detection {}: Left: {} Top: {} Right: {} Bottom: {}".format(118 k, d.left(), d.top(), d.right(), d.bottom()))119 # Get the landmarks/parts for the face in box d.120 shape = predictor(img, d)121 print("Part 0: {}, Part 1: {} ...".format(shape.part(0),122 shape.part(1)))123 # Draw the face landmarks on the screen.124 win.add_overlay(shape)125126 win.add_overlay(dets)127 dlib.hit_enter_to_continue()

有需要的小伙伴们,快来试试这个模型吧!

原文标题:超越Dlib!81个特征点覆盖全脸,面部特征点检测更精准(附代码)

文章出处:【微信号:AI_era,微信公众号:新智元】欢迎添加关注!文章转载请注明出处。